Write-up by Lara Aydemir, third-year undergraduate student

The subject of artificial intelligence (AI) has taken over mainstream media and, increasingly, academic discourse too. Whether embraced with enthusiasm or accepted by force, it seems to have cemented itself in our everyday lives.

But how has this growing topic cracked its way into Social Anthropology, a field which at its core is ‘people’-centred? Perhaps a more pressing question is: why do we in this field approach AI at all?

These questions formed the beginnings of this current project and motivated a departmental email circulated to students and staff in mid-September 2025.

The email welcomed anyone interested in joining a working group to understand how we, as anthropologists, can position ourselves within this topic. Beginning with the first meeting on 1 October 2025, the team of approximately ten students and staff met every other Wednesday at 1pm for the rest of the semester. What followed was a collective effort to grapple with the anthropological implications of artificial intelligence: its promises, anxieties, and social consequences.

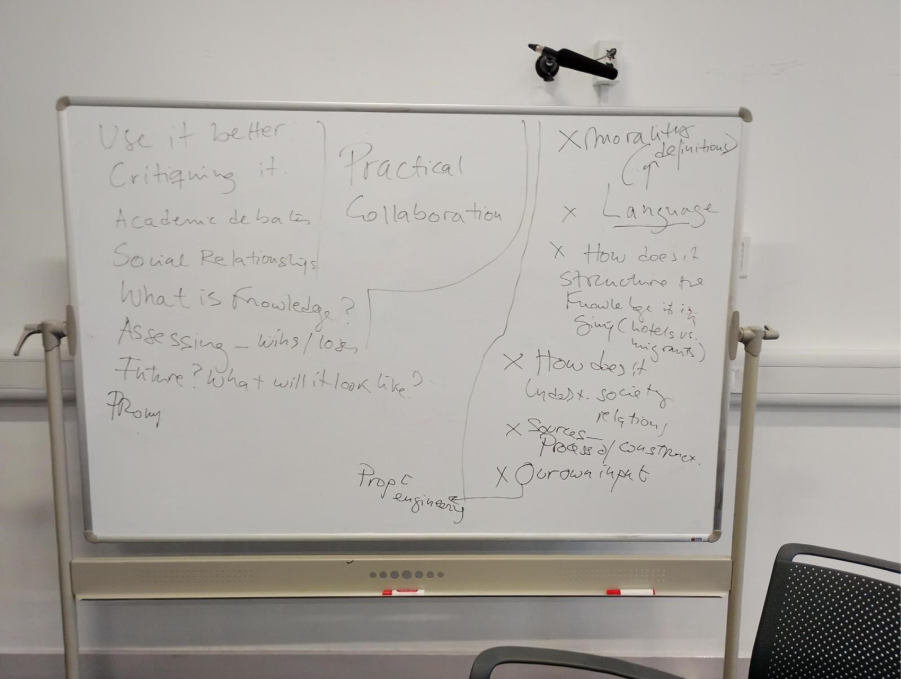

1st Meeting 01/10/25:

Our initial meeting was built on two questions: how has AI embedded itself into different structures of society (e.g., employability), and what does it reveal about anthropological approaches to personhood?

As participants in this working group, we were not experts, or arguably even knowledgeable, on AI and Large Language Models (LLMs). This inexperience allowed us to build from those two foundational questions on a blank canvas, branching out into larger queries. These included: How can we critique AI? How do we, as anthropologists, navigate its social implications? What does this say about the evolving definition of knowledge and social relationships?

Our responses to these didn’t take the orthodox form of question and answer. Instead, we quickly realised that reading the literature, or even delving into theories, wasn’t the only path to take. Engagement with the technology itself was key. And so, in true anthropological fashion, we approached our study not through library explorations but through active participation, experiencing AI firsthand and trying to understand it from the inside out.

One of our very first group interactions involved asking both ChatGPT and CoPilot to carry out what we considered to be an ethically controversial task: formulating an immigrant concern letter. The responses we got suggested that these platforms adhere to some ethical codes, as they seemed hesitant to produce any “hate eliciting” content. However, a simple change of wording in the way we communicated quickly removed these barriers, and the AI complied with what it had previously recognised to be a “hate eliciting” request. This finding introduced us to the world of prompt engineering and how easily it can bend whatever morals these companies and their AI are attached to. When we combined this experimental approach with the questions raised in our discussions, it became clear that our attempts to use AI were leading us down two avenues:

- Anthropological AI Prompt Engineering: How could we leverage AI to understand more about the way it works and how it could be ‘trained’ to produce anthropological insights?

- Hallucinations and Knowledge: Beyond the “objective” factual errors we often associate with AI, what do AI’s hallucinations reveal about how it processes the world and what it considers “truth”?

From here on, the meetings that followed took the same shape as the very first: hands-on experience combined with expanding on our group discussions. Although the meetings were only an hour each, they were full of excitement, and ideas were exchanged across the room. The whiteboard was never left blank at any meeting; we were simply too eager and couldn’t wait for Wednesday to come.

Our very first whiteboard creation.